The potential memory access ‘latency’ is masked as long as the GPU has enough computations at hand, keeping it busy.Ī GPU is optimized for data parallel throughput computations. Reason being is that a GPU has more transistors dedicated to computation meaning it cares less how long it takes the retrieve data from memory. Typically, one SM uses a dedicated layer-1 cache and a shared layer-2 cache before pulling data from global GDDR-5 memory. Its architecture is tolerant of memory latency.Ĭompared to a CPU, a GPU works with fewer, and relatively small, memory cache layers. Each SM accommodates a layer-1 instruction cache layer with its associated cores. If we inspect the high-level architecture overview of a GPU (again, strongly depended on make/model), it looks like the nature of a GPU is all about putting available cores to work and it’s less focussed on low latency cache memory access.Ī single GPU device consists of multiple Processor Clusters (PC) that contain multiple Streaming Multiprocessors (SM). Caches sizes range up to 2MB L2 cache per core. The numbers of cores per CPU can go up to 28 or 32 that run up to 2.5 GHz or 3.8 GHz with Turbo mode, depending on make and model. If data is not residing in the cache layers, it will fetch the data from the global DDR-4 memory. The layer-3 cache, or last level cache, is shared across multiple cores. Let’s first take a look at a diagram that shows an generic, memory focussed, modern CPU package ( note: the precise lay-out strongly depends on vendor/model).Ī single CPU package consists of cores that contains separate data and instruction layer-1 caches, supported by the layer-2 cache. A high-level overview of modern CPU architectures indicates it is all about low latency memory access by using significant cache memory layers. Both use the memory constructs of cache layers, memory controller and global memory. Looking at the overall architecture of a CPU and GPU, we can see a lot of similarities between the two. 
And when we speak of cores in a NVIDIA GPU, we refer to CUDA cores that consists of ALU’s (Arithmetic Logic Unit). However, it is not only about the number of cores. It emphasizes that the main contrast between both is that a GPU has a lot more cores to process a task. The following exemplary diagram shows the ‘core’ count of a CPU and GPU. It does so by being able to parallel process a task. A GPU is all about throughput optimization, allowing to push as many as possible tasks through is internals at once. It’s nature is all about processing tasks in a serialized way. A common CPU is optimized to be as quick as possible to finish a task at a as low as possible latency, while keeping the ability to quickly switch between operations. Let’s first take a look at the main differences between a Central Processing Unit (CPU) and a GPU. But why do we need a GPU for these types of all these workloads? This blogpost will go into the GPU architecture and why they are a good fit for HPC workloads running on vSphere ESXi. healthcare, insurance and financial industry verticals. Calculations on tabular data is also a common exercise in i.e. Using a GPGPU is not only about ML computations that require image recognition anymore. HPC in itself is the platform serving workloads like Machine Learning (ML), Deep Learning (DL), and Artificial Intelligence (AI). Today, GPGPU’s (General Purpose GPU) are the choice of hardware to accelerate computational workloads in modern High Performance Computing (HPC) landscapes. In the consumer market, a GPU is mostly used to accelerate gaming graphics.

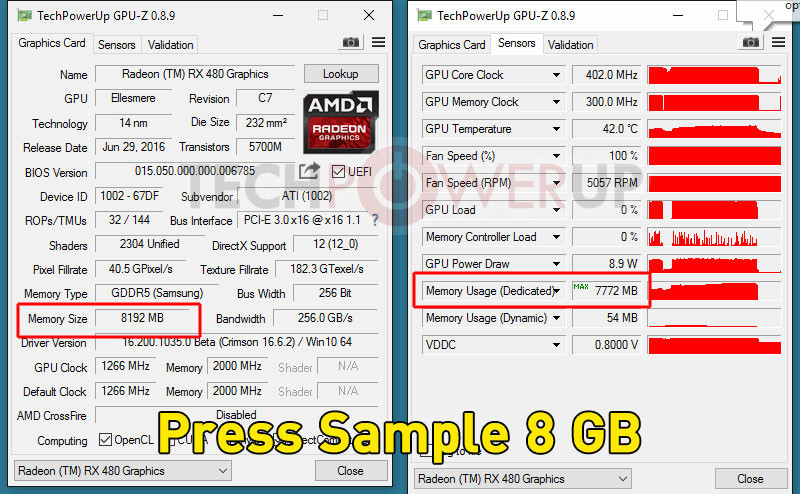

GPU Z MEMORY USAGE DEDICATED VS DYNAMIC SOFTWARE

3D modeling software or VDI infrastructures. A Graphics Processor Unit (GPU) is mostly known for the hardware device used when running applications that weigh heavy on graphics, i.e.
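
To make the idea of masking memory latency with computation a bit more tangible, here is a minimal back-of-the-envelope sketch in Python. It is not a description of how any specific GPU schedules work. It assumes a simplified round-robin model in which each group of threads (NVIDIA calls such a group a warp, a term not used elsewhere in this post) alternates between a burst of arithmetic and a memory request, and the SM switches to another resident group while one is waiting. The function names and the cycle counts are hypothetical and purely illustrative, not measured values.

```python
import math


def sm_utilization(resident_warps: int, compute_cycles: int,
                   memory_latency_cycles: int) -> float:
    """Fraction of time an SM spends computing in a simple round-robin model.

    Assumption: every warp repeats the same pattern of `compute_cycles` of
    arithmetic followed by one memory request that takes
    `memory_latency_cycles` to return. While a warp waits, the SM runs
    another resident warp if one is ready.
    """
    if resident_warps < 1 or compute_cycles < 1 or memory_latency_cycles < 0:
        raise ValueError("expected warps >= 1, compute >= 1, latency >= 0")
    period = compute_cycles + memory_latency_cycles
    # In one period each warp contributes `compute_cycles` of useful work;
    # utilization cannot exceed 100 percent.
    return min(1.0, resident_warps * compute_cycles / period)


def warps_to_hide_latency(compute_cycles: int,
                          memory_latency_cycles: int) -> int:
    """Smallest number of resident warps that keeps the SM fully busy."""
    if compute_cycles < 1 or memory_latency_cycles < 0:
        raise ValueError("expected compute >= 1, latency >= 0")
    period = compute_cycles + memory_latency_cycles
    return math.ceil(period / compute_cycles)


if __name__ == "__main__":
    # Illustrative numbers only: 20 cycles of math per memory request,
    # 400 cycles until the data arrives from global memory.
    compute, latency = 20, 400
    for warps in (1, 4, 8, 16, 32):
        busy = sm_utilization(warps, compute, latency)
        print(f"{warps:2d} resident warps -> SM busy {busy:.0%} of the time")
    print("warps needed to fully mask latency:",
          warps_to_hide_latency(compute, latency))
```

In this toy model a single group of threads leaves the SM idle most of the time, while enough independent groups keep it fully occupied even though the memory latency itself has not changed. That is the sense in which a GPU can get away with smaller caches: it relies on having plenty of parallel work rather than on fast memory access.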